There has been no official announcement or confirmation from Nvidia regarding the majority of the data presented here. We are expecting a Q3 release for the Nvidia RTX 40 series GPUs such as 4060, 4080, 4090, and their Ti variations.

BACKGROUND

The much-anticipated GeForce 30 series was announced on September 1, 2020. It came with plenty of new hardware: the new Ampere architecture, more and better RT cores, hardware-accelerated ray tracing, improved Tensor cores, better memory, and so on. The products started shipping some 16 days later. It has been nearly 550 days now. Nvidia is still milking the 30 series, releasing new and somewhat unnecessary video cards, such as the RTX 3070 Ti at $600 when they already have the much better RTX 3080 at $100 more. But it is time to talk about the 40 series now. Hopefully, it will be launching later this year.

DATA SOURCES

A recent hack on the Nvidia website has been drip-feeding information about the RTX 40 series to the wider internet. Some reliable leakers, who have been pretty accurate with their rumors in the past, have also been active lately.

We will be compiling data from news sources, media sites, and of course, reliable leak sources such as kopite7kimi and Greymon55 – not to mention the good old intuition and gravedigging research we like to equip ourselves with.

Release date

The release is most likely going to be September 2022, starting most probably with the RTX 4090.

If we take a look at the past dates, Nvidia releases a new graphics card series roughly every two years. In September ’20, they launched the RTX 30 series, and in 2018 they launched the RTX 20 series (with the GTX 16 series a year later to hit the low- and medium-end market).

So, it won’t be inaccurate to expect the 40 series to hit the market in or around September this year. By all estimations, development has already started.

Reliable leak source kopite7kimi mentioned on Twitter that “If nothing else, we will see 4090, 4080, and 4070 in 2022 Q3.” Q3 2022 means July through September 2022. Greymon55 has specifically mentioned that Ada Lovelace is going to launch in September 2022.

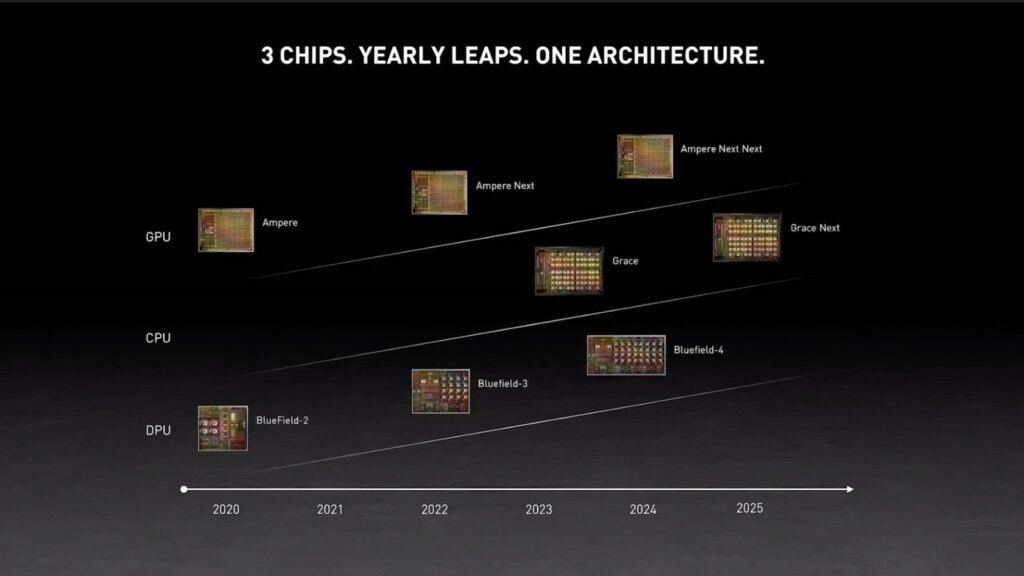

Following the same cadence, the “Next Next Ampere” architecture and the RTX 50 series can be expected to launch in the closing months of 2024.

There is also a less popular rumor that Nvidia might pair the release of Lovelace GPUs with Grace in Q1 2023. To the uninitiated, Grace is Nvidia’s new CPU for high-performance computing. This data center CPU is supposed to work with Nvidia cards to remove bottlenecks, and not to directly compete with Intel Xeon or AMD EPYC processors. Grace will be central to supercomputers used in research. On a side note, we hope Grace meets with success and acceptance, being a specialized CPU rather than a general-purpose one (we all remember the catastrophe that Nvidia’s Project Denver was).

Specs

Ada Lovelace architecture based on TSMC’s 5nm process node will likely double the compute prowess of the RTX 40 series vis-à-vis RTX 30 series at every price point.

RTX 4090 and RTX 4080 will both be rocking the flagship AD102 die. The AD102 cards can be compared to the GA102 cards – the most powerful video card architecture right now. GA102 cards include RTX 3080 and 3090, RTX A6000, and A40 (data center GPU).

Key features of the GA102 architecture such as high FP32 processing, second-generation RT cores, third-generation Tensor cores, third-generation NVLink, and PCIe Gen 4 might become a thing of the past with the release of the AD102. The only thing that will remain relevant is the GDDR6X memory – as Lovelace is going to be using the same memory type as Ampere.

TechPowerUp has a detailed overview of the AD102 architecture. The die size is 600 sq. mm., which is large but smaller than GA102 (628 sq. mm.). Like GA102, the chip will also support DirectX 12 Ultimate (12_2), OpenCL 3.0, OpenGL 4.6, Vulkan 1.2, Shader Model 6.6, and PureVideo HD VP12. It is listed with CUDA compute capability 9.0 (vs. the RTX 3090, which supports up to 8.6).

Compared to the GA102, the AD102 will have:

- 7,680 more CUDA cores (10752 vs. 18432)

- 30+ more teraflops (35.6 vs. 66) – doubtful, as other leaks suggest 90+ tflops

- 60 more RT cores (84 vs. 144)

- 5 more graphics processing clusters (7 vs. 12)

- 30 more texture processing clusters (42 vs. 72)

- 90MB more L2 cache (6MB vs. 96MB)

L2 cache is surely a monstrous jump. Nvidia is about to give all 40 series cards a beefy L2 cache. This means that the lower-end ones will get 32MB of it. In comparison, RTX 3060 right now only has 3MB of it. The RTX 4060, in effect, will have more L2 cache than RTX 3090 (6MB).
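The gap between the 66 and 90+ teraflop figures in the comparison above largely comes down to clock speed. As a rough sketch, peak FP32 throughput is usually estimated as 2 × CUDA cores × clock, since each core can retire one fused multiply-add (two floating-point operations) per cycle. The core count below is the rumored AD102 figure from the list; the function names are ours and purely illustrative.

```python
def fp32_tflops(cuda_cores: int, clock_ghz: float) -> float:
    """Peak FP32 throughput, counting one FMA (2 FLOPs) per core per clock."""
    return 2 * cuda_cores * clock_ghz / 1000.0


def implied_clock_ghz(cuda_cores: int, tflops: float) -> float:
    """Clock speed a chip would need to reach a given peak FP32 figure."""
    return tflops * 1000.0 / (2 * cuda_cores)


AD102_CORES = 18432  # rumored full-die core count, not confirmed by Nvidia

for target in (66.0, 90.0):
    clock = implied_clock_ghz(AD102_CORES, target)
    print(f"{target:.0f} TFLOPS -> {clock:.2f} GHz")
# 66 TFLOPS -> 1.79 GHz
# 90 TFLOPS -> 2.44 GHz
```

In other words, 66 teraflops assumes roughly Ampere-level clocks, while the 90+ teraflop leaks assume the full die running at around 2.4GHz or more.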

All this will demand significantly more power, of course. We are looking at a TDP of 450-600W for the RTX 4090. More on that in the power consumption section.

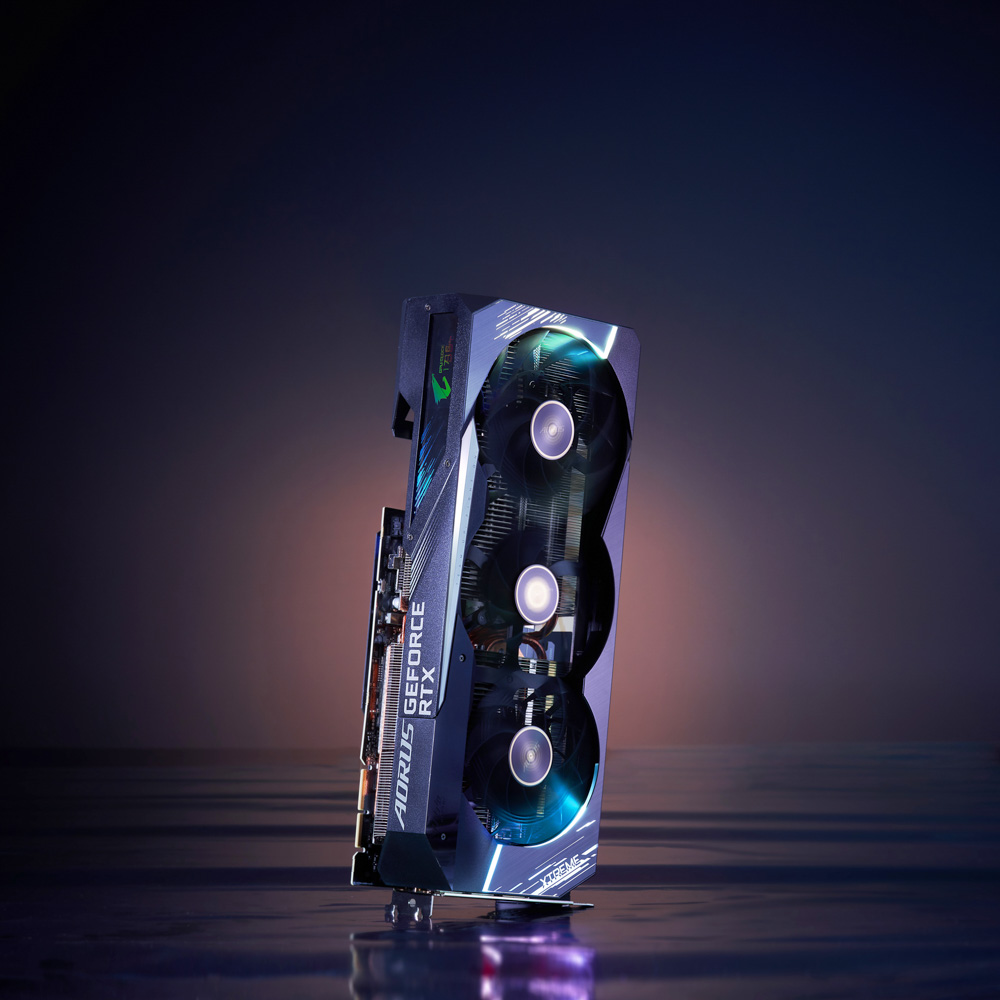

Of course, with higher specs and performance come higher thermals. Unless you want to invest heavily in liquid nitrogen or a submerged build, a quad-slot cooling solution such as the AORUS RTX 3090 Xtreme is likely the only option (shown below).

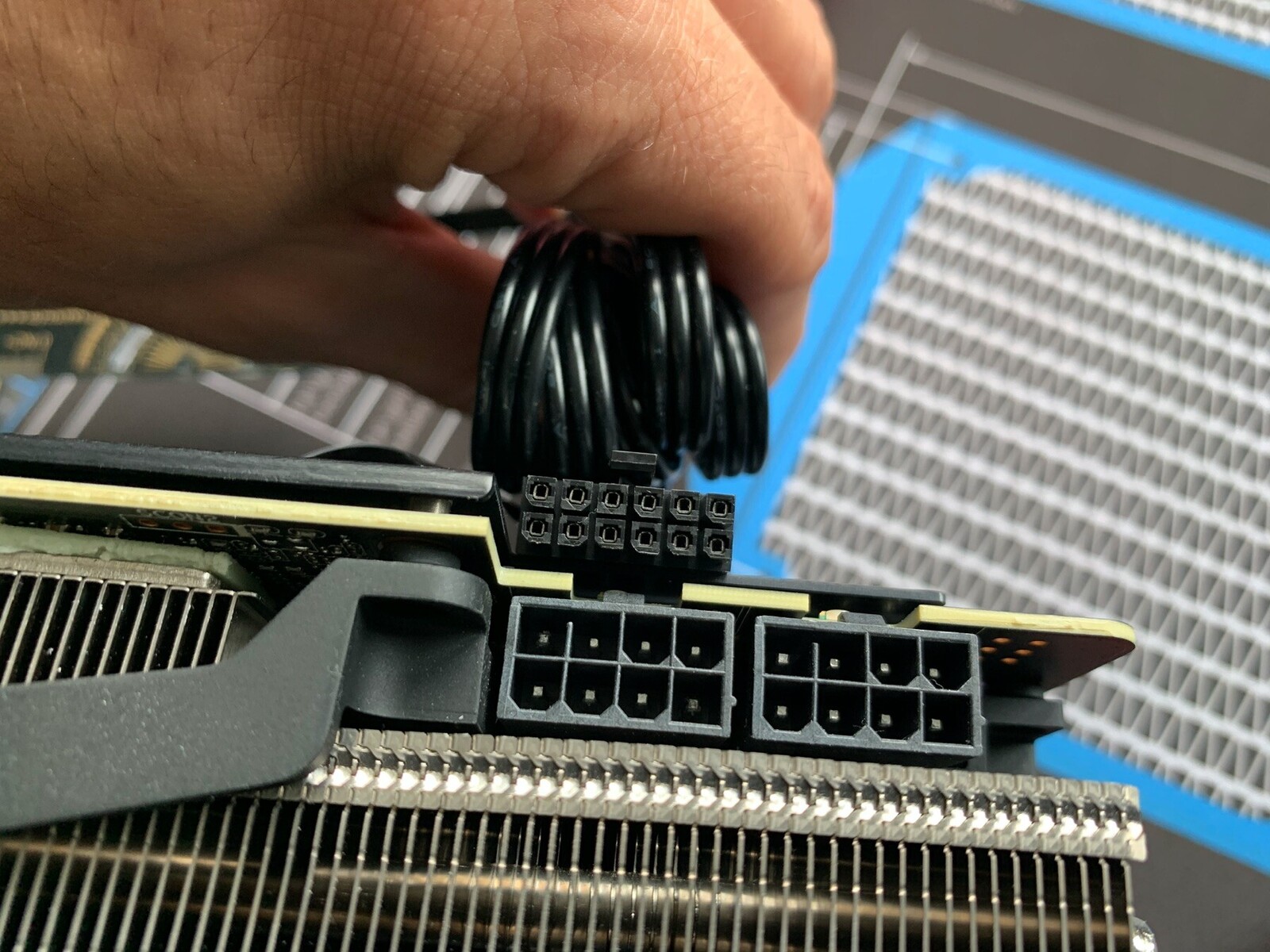

Board partners should prepare beforehand for cooling much like how PSU manufacturers are preparing for power delivery to the upcoming cards by providing PCIe 5.0 power. Though if rumors are to be believed, the RTX 4090 Ti will rock 800W, which means a single 600W 12-pin connector will be insufficient.
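The connector math is simple enough to sketch. The snippet below only divides a card's board power by the 600W per-connector limit quoted above; it ignores whatever the PCIe slot itself supplies, and the wattages fed in are rumored figures, not confirmed specs.

```python
import math

CONNECTOR_LIMIT_W = 600  # per-connector limit quoted in the text


def connectors_needed(board_power_w: float) -> int:
    """Minimum number of 600W connectors for a given board power."""
    return math.ceil(board_power_w / CONNECTOR_LIMIT_W)


for watts in (450, 600, 800):  # rumored power targets
    print(f"{watts}W -> {connectors_needed(watts)} connector(s)")
```

An 800W card lands on two connectors, which is why a single cable would not be enough for the rumored Ti.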

The Lovelace GPUs will easily run at 2.0 to 2.5GHz vs. the current 1.7 to 1.9GHz (base and boost clocks, respectively, though it is not specified whether these are peaks or averages). It’s important to note that AMD’s current series already offers a 2.5GHz boost on RDNA2, with RDNA3 in the works and about to push it much further.

Pricing

We expect Nvidia to run in line with the current MSRPs and add 10-15% to it.

A 10-15% increase sounds about fair for double the performance and remarkably higher power consumption. However, just like performance, no pricing structures have been revealed by Nvidia either. If they stick close to the Ampere lineage, we are looking at these prices for the Nvidia RTX 40 series:

- RTX 4060 around $300-350

- RTX 4070 around $500-550

- RTX 4080 around $700-750

- RTX 4090 in the $1000-$1600 range

This is before we take into account that the 5nm TSMC node process can be costlier to Nvidia. If it is, feel free to add another 100 bucks to each estimated price point above.

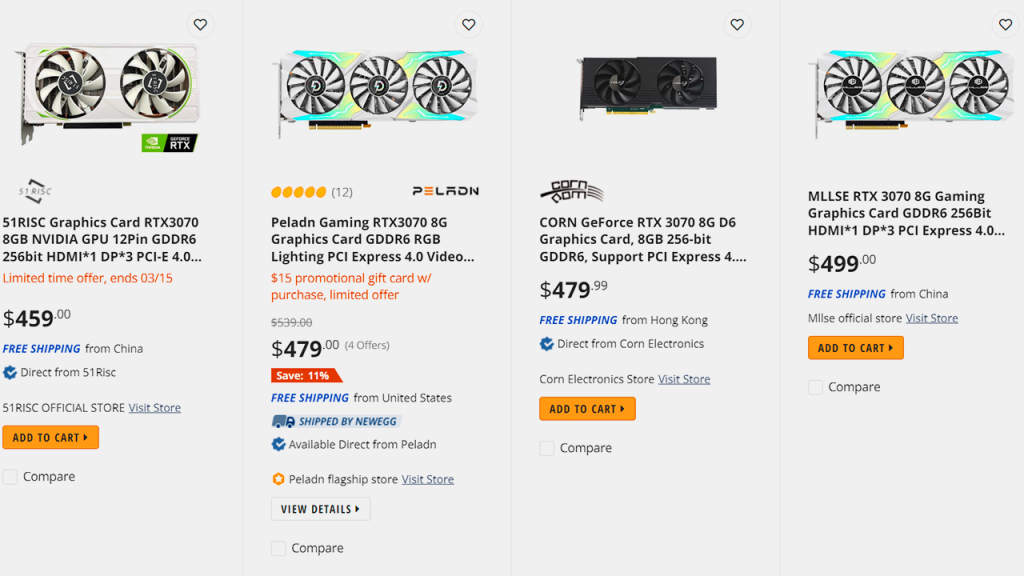

And of course, these are the MSRPs we are talking about. Seeing how $500 cards are being bought for $1000 on sites like Newegg and eBay, the secondhand or scalpers market won’t be forgiving to the RTX 40 series either. So, what are we looking at? Is shelling out $2000 for a 600W GPU, which requires you to upgrade the rest of your PC as well, becoming the new normal? Only time will tell, but we sure hope not.

On the bright side, Nvidia might not see the high spending on GPUs by gamers lately as a cash cow. Combining that with Nvidia CFO Colette Kress confirming (full report on Tom’s Hardware) that the supply situation will improve in the second half of 2022, we can actually look at pretty competitive pricing for the RTX 40 series.

Add to that the report that Nvidia is lowering GPU cost by 8-12% for AICs, which directly passes to system integrators, and ultimately ends up as savings for gamers. Read Wccftech’s report for more.

In short, this news comes amid a war situation that can derail projections. It also comes at a time when Ethereum is close to being done with its Proof of Work consensus mechanism and eliminating mining, which will decrease GPU demand from miners by a lot, even if a strong Ethereum successor surfaces soon afterward.

We have been seeing a price decline in GPUs for months now and there is a good chance that it will continue for the rest of 2022, indirectly impacting where the RTX 40 series is priced.

Power consumption

To double the performance of the RTX 3090, the 40 series flagship will draw a ton more power, and with no breakthrough power-saving technology, we’re looking at an absolutely power-hungry monstrosity in the upcoming RTX 4090.

A card TDP in the range of 450W to 600W vs. RTX 3090’s 350W is surely a big upgrade. Couple that with more cooling needs, and you easily need more power from the wall. You will also need a beefier PSU. Your older rails will not be enough under most circumstances.

- Greymon55 replied to a tweet about the Lovelace range and said that the AD102 GPUs will need a 1500W PSU. The TDPs can easily be well over 450W-600W in this case, and might actually reach highs of 850W (February 23, 2022).

- Kopite7kimi also mentioned that the RTX 4090 can be a 600W TGP GPU, while commenting that it’s too early to talk about it (March 12, 2022).

450W to 850W seems to be the ballpark range. But designs can change.

A video card’s cooling is designed first. If Nvidia sees that the TDPs are simply impractical, they might change the specs and lower the total power consumption. It is really too soon to talk about the actual power draw. In any case, for now, the RTX 4090 can easily have a 600W TGP and its Ti variant can easily go over 800W.

Keep in mind that board partners usually end up adding 20-30W on top-end GPUs with their own cooling systems.

The newer PCIe 5.0 12-pin connectors can deliver 600W of power now, so it makes sense to capitalize on this, but ultimately, it’s the gamers who will be paying the price in electricity costs.
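To put the electricity bill in perspective, here is a back-of-the-envelope sketch comparing the RTX 3090’s 350W with a rumored 600W card. The three hours of daily gaming and the $0.15 per kWh rate are placeholder assumptions of ours, so plug in your own numbers. It also treats the card as drawing its full rated power the whole time, which overstates real-world use.

```python
def annual_cost_usd(card_watts: float, hours_per_day: float, usd_per_kwh: float) -> float:
    """Yearly electricity cost of the GPU alone, assuming full rated draw."""
    kwh_per_year = card_watts / 1000.0 * hours_per_day * 365
    return kwh_per_year * usd_per_kwh


HOURS_PER_DAY = 3.0  # assumption: daily gaming time
USD_PER_KWH = 0.15   # assumption: electricity rate

for label, watts in (("RTX 3090 (350W)", 350), ("Rumored RTX 4090 (600W)", 600)):
    cost = annual_cost_usd(watts, HOURS_PER_DAY, USD_PER_KWH)
    print(f"{label}: ${cost:.2f} per year")
```

Under these assumptions the difference is a few tens of dollars a year, which adds up over the life of the card and scales directly with how much you play and what your utility charges.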

It is worth noting that the TSMC 5nm process is 14% more power-efficient than the 7nm node. Ampere uses Samsung’s 8nm process node for the RTX 30 series (and TSMC’s 7nm for professional GPUs such as the A100), so a comparison cannot be made directly with RTX 30 series in this regard.

GPUs

So far, we are fairly sure of the RTX 4080, 4080 Ti, and 4090 GPUs using the AD102 die and the RTX 4050, 4060, and 4070 GPUs using the AD106, AD104, and AD103 dies respectively.

Nvidia GeForce RTX 4080, 4080 Ti, and 4090 will be the new high-power GPUs.

- RTX 4050: 8GB VRAM, 3840 shaders, 120 TMUs, 48 ROPs, 128-bit bus

- RTX 4060: 12GB VRAM, 5888 shaders, 185 TMUs, 64 ROPs, 192-bit bus

- RTX 4070: 12GB VRAM, 9728 shaders, 304 TMUs, 112 ROPs, 192-bit bus

- RTX 4080: 16GB VRAM, 14080 shaders, 440 TMUs, 176 ROPs, 256-bit bus

- RTX 4080 Ti: 20GB VRAM, 16128 shaders, 504 TMUs, 176 ROPs, 320-bit bus

- RTX 4090: 24GB VRAM, 17408 shaders, 544 TMUs, 192 ROPs, 384-bit bus
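A quick sanity check on the leaked memory configurations: each GDDR6 or GDDR6X chip sits on a 32-bit slice of the bus, so the bus width fixes the chip count. The sketch below assumes 2GB (16Gb) chips, which is our assumption rather than part of the leak, and checks whether the rumored VRAM sizes follow from the rumored bus widths. The bandwidth helper takes the per-pin data rate as a parameter because no rate has been leaked.

```python
BITS_PER_CHIP = 32  # each GDDR6/GDDR6X chip uses a 32-bit interface
GB_PER_CHIP = 2     # assumption: 16Gb (2GB) memory chips

# Rumored (VRAM in GB, bus width in bits) from the list above
RUMORED = {
    "RTX 4050": (8, 128),
    "RTX 4060": (12, 192),
    "RTX 4070": (12, 192),
    "RTX 4080": (16, 256),
    "RTX 4080 Ti": (20, 320),
    "RTX 4090": (24, 384),
}


def expected_vram_gb(bus_bits: int) -> int:
    """VRAM implied by the bus width with one 2GB chip per 32-bit channel."""
    return bus_bits // BITS_PER_CHIP * GB_PER_CHIP


def bandwidth_gb_per_s(bus_bits: int, gbps_per_pin: float) -> float:
    """Peak memory bandwidth for a given bus width and per-pin data rate."""
    return bus_bits * gbps_per_pin / 8


for card, (vram_gb, bus_bits) in RUMORED.items():
    ok = expected_vram_gb(bus_bits) == vram_gb
    print(f"{card}: {bus_bits}-bit -> {expected_vram_gb(bus_bits)}GB "
          f"({'matches' if ok else 'differs from'} the leak)")
```

Every entry in the list is consistent with 2GB chips. For reference, `bandwidth_gb_per_s(384, 19.5)` gives the RTX 3090’s 936 GB/s, so a 384-bit RTX 4090 would need faster memory to improve on it.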

The RTX 4090 is going to feature a triple-slot cooling solution at least.

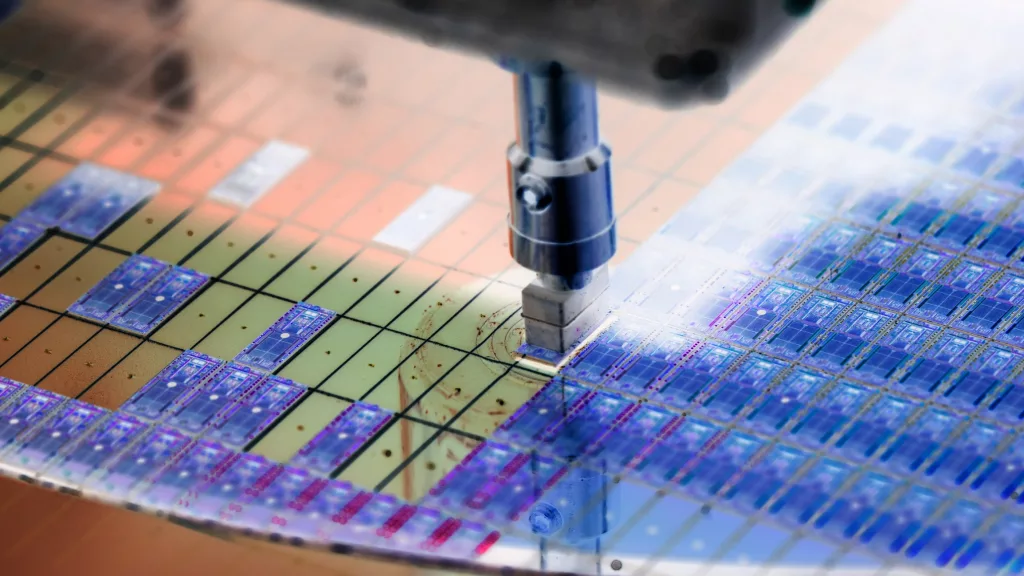

TSMC’s 5nm process supply

We believe Team Green has finally learned from the past and hopefully, this time the supply shortage will be much smaller, or less painful.

Nvidia has already spent around $9 billion in prepayments and inventory purchases. A majority of it is speculated to be for the RTX 40 series. This was first spotted in Nvidia’s latest Q4 report.

Reserving process node capacity is a critical task given the silicon shortage we are going through, at a time when nearly every device relies on semiconductor chips.

TSMC and Samsung are the only two manufacturers producing 5nm MOSFET nodes, and reservations are already made by smartphone and electronics manufacturers such as Marvell, Huawei, Apple, and Qualcomm.

TSMC’s 5nm process node increases yields and performance by decreasing the defect density. The lower the defect density, the more “good silicon” will be left during the fabrication process. TSMC is pretty good at lowering the defect density, and the 5nm process is actually going better than 7nm’s improvement in defect density.
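To see why defect density matters so much for a die as large as AD102, here is a minimal sketch using the simple Poisson yield model, Y = exp(-A × D0), where A is the die area and D0 is the defect density. This is a textbook approximation, not TSMC’s actual yield model, and the two defect densities below are hypothetical values chosen only to show the trend. The 600 sq. mm. die size is the rumored AD102 figure.

```python
import math


def poisson_yield(die_area_mm2: float, defects_per_cm2: float) -> float:
    """Fraction of defect-free dies under the simple Poisson yield model."""
    die_area_cm2 = die_area_mm2 / 100.0
    return math.exp(-die_area_cm2 * defects_per_cm2)


AD102_AREA_MM2 = 600.0  # rumored die size

for d0 in (0.10, 0.07):  # hypothetical defect densities (defects per sq. cm)
    y = poisson_yield(AD102_AREA_MM2, d0)
    print(f"D0 = {d0:.2f}/cm^2 -> {y:.1%} good dies")
```

Because the die area sits in the exponent, even a small drop in defect density translates into a noticeably larger share of usable chips on a big die, whereas a small smartphone chip would barely notice the difference.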

TSMC’s 5nm manufacturing process is already in mass production (high-volume production), and smartphone manufacturers will be the first ones to come out with products using this new technology with far lower defect densities.

Note that nothing in the whole 5nm silicon fabrication process is actually 5 nanometers. The names 5nm, 7nm, 8nm, etc. are merely marketing terms used to define something better and improved by denoting it to be “smaller”. In silicon electronics and circuits, smaller parts mean more performance because the same wafer can hold more transistors, for example.