AI has taken the world by storm, and everyone is talking about it. More specifically, people are either scared of generative AI tools or experimenting extensively with them to create text and images. There are also many reports of AI- and ML-based robots, computer vision applications, entertainment applications, healthcare solutions, and more.

Background

In a basic sense, artificial intelligence has been with us for a long time. When you play chess against the computer, for example, you are actually playing against a chess AI. Mostly, an AI program is meant to do one task really well, often better or faster than humans. It’s trained on heaps of patterns to analyze probable outcomes. But what if machines were given programs that could do general thinking, update their own code, and set their own instructions and limitations? That’s the scarier future, and it is called artificial general intelligence, or AGI.

Today, companies are leveraging AI left, right, and center. Agencies use it to create ad copy and make SEO plans for clients, and almost all the leading IT and consultancy firms in the world offer business clients a way to incorporate AI into their workflows (possibly to cut rote-task jobs). And it’s not just the big names like IBM, Deloitte, or Accenture. Even smaller firms are helping businesses find ways to incorporate generative AI in some capacity, like a firm I recently learned about (https://txidigital.com/).

People are pushing back as well. Fears about AI’s impact and pressure from the US and European governments are certainly getting some work done. For example, big tech companies formed an AI safety body called the Frontier Model Forum.

So, when AI is doing so much, why should games be left behind? Game developers are now using AI to make more interesting NPCs. Just the other day when I was playing New World (which I seem to be doing more often than I expected), I saw three NPCs programmed to mimic real humans talking. More specifically, one guy was telling a joke (there was no sound, but you could tell from the mannerisms) and the other two were apparently laughing.

The reactions of the other two matched the delivery of the one telling the joke. But this is just more realistic programming. There’s no real AI here. The real breakthrough is with an Unreal Engine 5 plugin.

Unreal Engine 5’s AI NPCs

Replica Studios’ Smart NPCs is an AI-based experience that you can interact with. It’s like having your own Matrix world. There are already many videos covering it, and watching one is the quickest way to fully understand what it means.

Well, if you’ve used ChatGPT, you know the responses are based on the model’s training data, so they are not super personalized. But still, if this is the beginning, one can only wonder how realistic actual NPCs in actual games are going to be.

Maybe they will initiate the conversation? Maybe they will remember things for longer? Some might even become your buddies as you explore a city in a game like GTA. Who knows.
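To make the "remember things for longer" idea a bit more concrete, here is a rough sketch of how an NPC could keep a short conversation history and fold it into the text sent to a language model. Everything in it is my own assumption for illustration: the `NPCMemory` class, the `max_turns` limit, and the prompt layout are hypothetical and are not taken from Replica's plugin or any real game.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass
class NPCMemory:
    """Hypothetical short-term memory for a chat-driven NPC (illustration only)."""
    name: str
    persona: str
    max_turns: int = 6  # assumed limit; a real game would tune this
    turns: deque = field(default_factory=deque)

    def remember(self, speaker: str, text: str) -> None:
        # Store the newest line and forget the oldest once the limit is exceeded.
        self.turns.append((speaker, text))
        while len(self.turns) > self.max_turns:
            self.turns.popleft()

    def build_prompt(self, player_line: str) -> str:
        # Assemble the text a language model would receive for the next reply.
        history = "\n".join(f"{speaker}: {text}" for speaker, text in self.turns)
        return (
            f"You are {self.name}, {self.persona}.\n"
            f"Conversation so far:\n{history}\n"
            f"Player: {player_line}\n"
            f"{self.name}:"
        )


if __name__ == "__main__":
    vendor = NPCMemory("Mara", "a street vendor", max_turns=2)
    vendor.remember("Player", "Hi")
    vendor.remember("Mara", "Hello")
    vendor.remember("Player", "Remember me?")
    print(vendor.build_prompt("What do you sell?"))
```

A bigger `max_turns` means the NPC "remembers" more of the chat, at the cost of a longer prompt. The sketch stops at building the prompt; sending it to an actual model is left out on purpose.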

Replica has also published an official blog post about their UE5 plugin.

In other news, a Chinese game developer called NetEase is rolling out a game called Justice Online Mobile that uses a large language model (like the one ChatGPT uses) to offer real-time dialog generation and unique reactions from characters. The model is trained on relevant data about the period in which the game is set, so the responses should be apt and colorful.

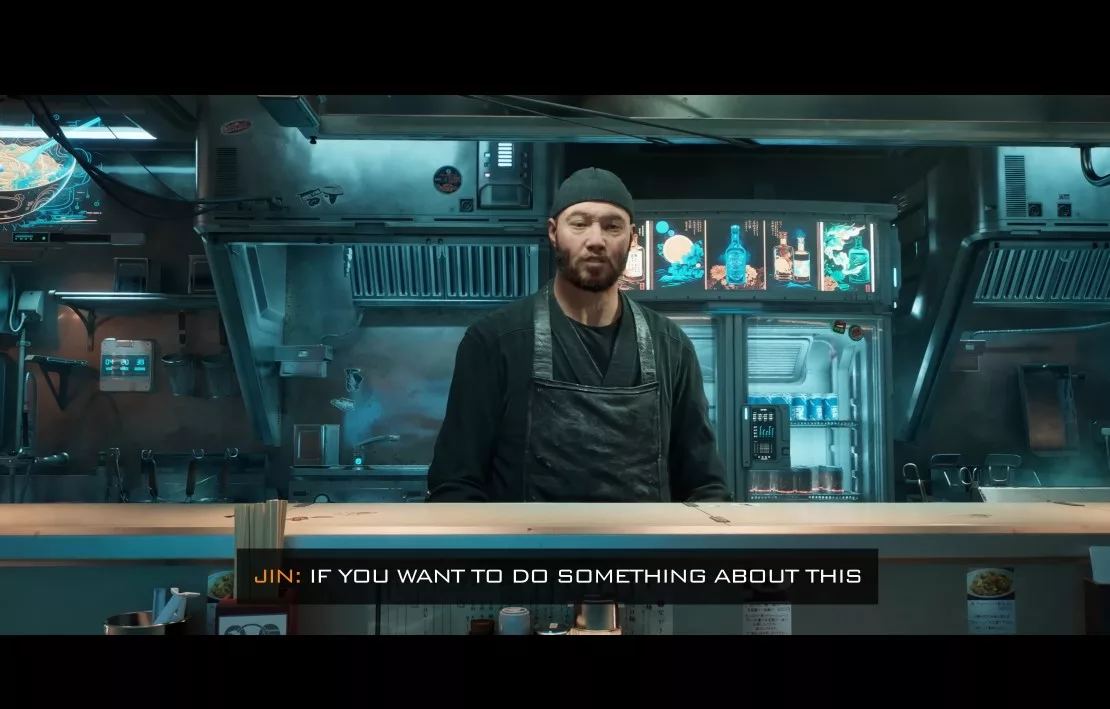

In yet another piece of news, Nvidia showed interaction with Cyberpunk’s NPCs. This is not just a dialog selection gimmick. It is a full-blown conversation that you can start with anybody using your own lines, to which they will respond.

Even Sony is working on AI to create computer-controlled characters that are more than linear and predefined. Reportedly, they are working on characters that can “be a player’s in-game opponent or collaboration partner.” To me, if Green from Pokémon becomes an AI NPC, it will all be worth it to replay Red.

Asset creation with AI

Generative AI for conversations with NPCs or dialog options from game characters is actually quite simple to set up. But consider Hollywood, where producers allegedly wanted to get rid of background actors entirely by hiring and shooting them once, then reusing their digital persona or “likeness” indefinitely without pay (what?!). Game studios are just as money-hungry and want to cut their costs in every way possible.

Even the first episode of the latest Black Mirror season showed this. We can’t say we haven’t been warned.

Back to the topic: creating the 3D assets that make up a game using only AI seems to be a great way to fire game artists, or to not hire them for a new project in the first place. My own friends are in the game industry. They make everything from guns to environments, and it takes months.

Now, Unity has this big idea of creating “incredible experiences with real-time 3D” that has shot up the stock value of the company by a lot. Judging by their official page on AI, the program seems to be in beta at the moment.

AI tools can already do programming, and they are competent. Microsoft is launching the Copilot app for Windows, GitHub has its own Copilot, and Google also released an experiment called IDX, which is a full-stack environment (from ideation to deployment). All of these tools allow current developers to become faster. They don’t replace them altogether.

But the bottom line is that, with the uncanny speed at which AI programming is getting better (converting an image into 3D is more or less programming), it’s only a matter of time before AI can be used to program game levels or generate 3D models from just images.

Image-to-3D conversion and AI game programming are already topics of focus, and a lot of companies are investing heavily in them. After all, they give you the ability to hire fewer people to do the same job.

Initially, we will most likely be working alongside AI. Think of anime for a moment. Key animators draw the main frames, and junior animators fill in the in-between frames or do the coloring. What if this job, in the context of designing and developing a game, is taken up by AI? Lead animators and the modeling, texturing, lighting, and design artists will focus on the key things. The rest will be handled by AI and not by junior artists.
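For a feel of what "filling in the in-betweens" means mechanically, here is a tiny sketch. It uses plain linear interpolation between two key poses, which is a much simpler stand-in for whatever an AI tool would actually do, and the `inbetween` function and the pose format (a flat list of numbers) are my own assumptions for illustration.

```python
def inbetween(key_a, key_b, steps):
    """Return `steps` poses evenly spaced between two key poses.

    Each pose is a flat list of numbers (for example joint angles or x/y
    positions). The key poses themselves are not included in the result.
    """
    if len(key_a) != len(key_b):
        raise ValueError("key poses must have the same number of values")
    frames = []
    for i in range(1, steps + 1):
        t = i / (steps + 1)  # fraction of the way from key_a to key_b
        frames.append([a + (b - a) * t for a, b in zip(key_a, key_b)])
    return frames


if __name__ == "__main__":
    # Two key poses drawn by the "lead artist", three frames filled in between.
    for frame in inbetween([0.0, 0.0], [4.0, 8.0], 3):
        print(frame)
```

The lead artist still decides the two key poses. Only the frames between them are generated, which is exactly the part of the job that would move from junior artists to a tool.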

Where do we go from here?

Well, AI is here to stay. As much as I hate to admit it, it’s meaningless to try and fight the proliferation of a technology that can help corporations cut costs. Corporations just don’t care about your jobs.

There are already legal battles over copyright issues that we haven’t even touched upon. If a model is trained on copyrighted material and then produces a piece of work, should all the images used get credited? The problem is that you can’t know exactly which references were used to generate the output (the black box problem).

Art copyright issues aside, should we just embrace AI and go along with it? Should we trust these safety and regulatory bodies to protect our best interests? Honestly, I don’t know.